Montage and Brightness Stretching

Contents

Stretching Astronomical Images

The purpose of this document is to review the various ways data values in images can be stretched to given good screen brightnesses and colors, primarily in the context of the Montage module mViewer. Since displays take 8-bit (0-255) values (or three of these for color), such stretching is a necessity.

Most astronomical images have extreme histograms, with a lot of values at low data values (sometimes background noise but sometimes low-level sky structure) with a few areas (e.g., stars) that are extremely bright. Often, it is the slight proportional differences between these bright areas that is of interest but equally it may be just as important to show the subtle differences in the low level structure.

So stretching cannot readily be automated. Certain formulae will work well for certain types of field, certain datasets, etc. so there are rules of thumb that sometimes make the process more predictable as well as stretching methods that are better behaved than others. We will try to outline these options here.

Each of the sections below includes a set of examples. Each of these can be expanded for more detailed viewing and since many of the effects (and a lot of the science) are subtle, we recommend comparing these at full resolution (e.g., using right-click / open in new window).

Linear Stretch |

Top |

Linear stretching is the simplest transform and usually (though not always) a very bad one. If the data histogram is relatively uniform (e.g., like a velocity map) then a linear stretch where the display values are linearly spaced in data values between some minimum and maximum works well. However, when the distribution of brightnesses has a very long positive tail (a few pixels with very high but very important values) you are faced with two equally unpleasant alternatives: either stretch from minimum to maximum data values or pick a range artificially where everything above the chosen maximum saturates.

A common compromise is to work in percentiles and have the stretch only saturate those pixels above the 99th (or 99.9th or whatever) percentile. While "only" 1% of the pixels are then saturated and the overall result often looks pretty good, that 1% is often where a lot of the science is. The same thing is true at the low end (starting the stretch at the 1% level) where 1% of the pixels then map to "black". [Note: we aren't yet considering choice of color table; terms like black and white refer to using a simple grayscale.]

But basically the linear stretch boils down to choosing a range of values where the linear transform brings out the features we wish to concentrate on, and abandoning the pixels outside that range. The stretches for the images below were adjusted manually to optimize the information content. We don't bother showing min/max stretches as all but a few dozen pixels in each ends up black. In the following sections (but the last) we have similarly adjusted the stretch parameters to make the result as good as possible so the more subtle effects of the stretches can be compared.

|

|

|

Logarthmic Stretch etc. |

Top |

There are a lot of non-linear stretches. Basically, they all try to transform the brightnesses in a way that gives more weight to the values in the tail of the histogram than one gets from linear stretching.

Why is this necessary? When faced with continuous brightness values the eye/brain is good at adapting to the range of input. From the adaptive aperture of the iris to the non-linear response of the retina to the (artificial) intelligence of the (trained) neural network in the brain, we are built to built to be able to see both subtle differences in low-level lighting and detail in bright regions, even in the same scene depending on where we are focussing. When we are required to push this through a 0-255 device like a display screen, we need to reproduce algorithmically as much of this as possible and rely on the eye/brain to interpret the resultant gentle brightness differences as a good representation of the original.

A side note: On top of all the above, at least when using a display device, the screen luminance is not linear with the data values but subject to a power law "gamma" correction.

Since the 1930s, it has been known that the eye responds roughly logarithmically to light intensity (square root at very low light levels and with saturation effects for very bright light) over a brightness factor range of around 10 billion. Astronomical data frequently covers similarly large ranges, with bright sources and low level structure in the same field often many orders of magnitude in brightness value apart. So a logarithmic transform is both a good analog to the eye response and matched fairly well to the long positive tail of a statistical distribution function.

But it still isn't perfect. The normal approach is to choose minimum and maximum values to tie the logarithmic shape to. So while the range between these usually looks better (generally more lower-level bins without ignoring structure at the brighter end), you still have some saturated pixels or you stretch it so high that low-level structure is lost. Accentuating the shape of the curve (e.g., log(log(brightness))) is possible but usually doesn't add much.

|

|

|

Asinh Stretch |

Top |

The Sloan Digital Sky Survey (SDSS) proposed an alternate magnitude scale (Lupton, Gunn & Szalay (1999)) based on the hyperbolic sine function. This magnitude scale is characterized by an softening parameter (a value down near the noise level in the data). The net effect is a scale that behaves like a classical magnitude at bright values but is better behaved at low (and even negative) data values.

Extending this idea to image stretching, we can apply an asinh() transform to the data. This will be logarithmic at high values but gives us more control (via the softening parameter) over the transformation of low level structure.

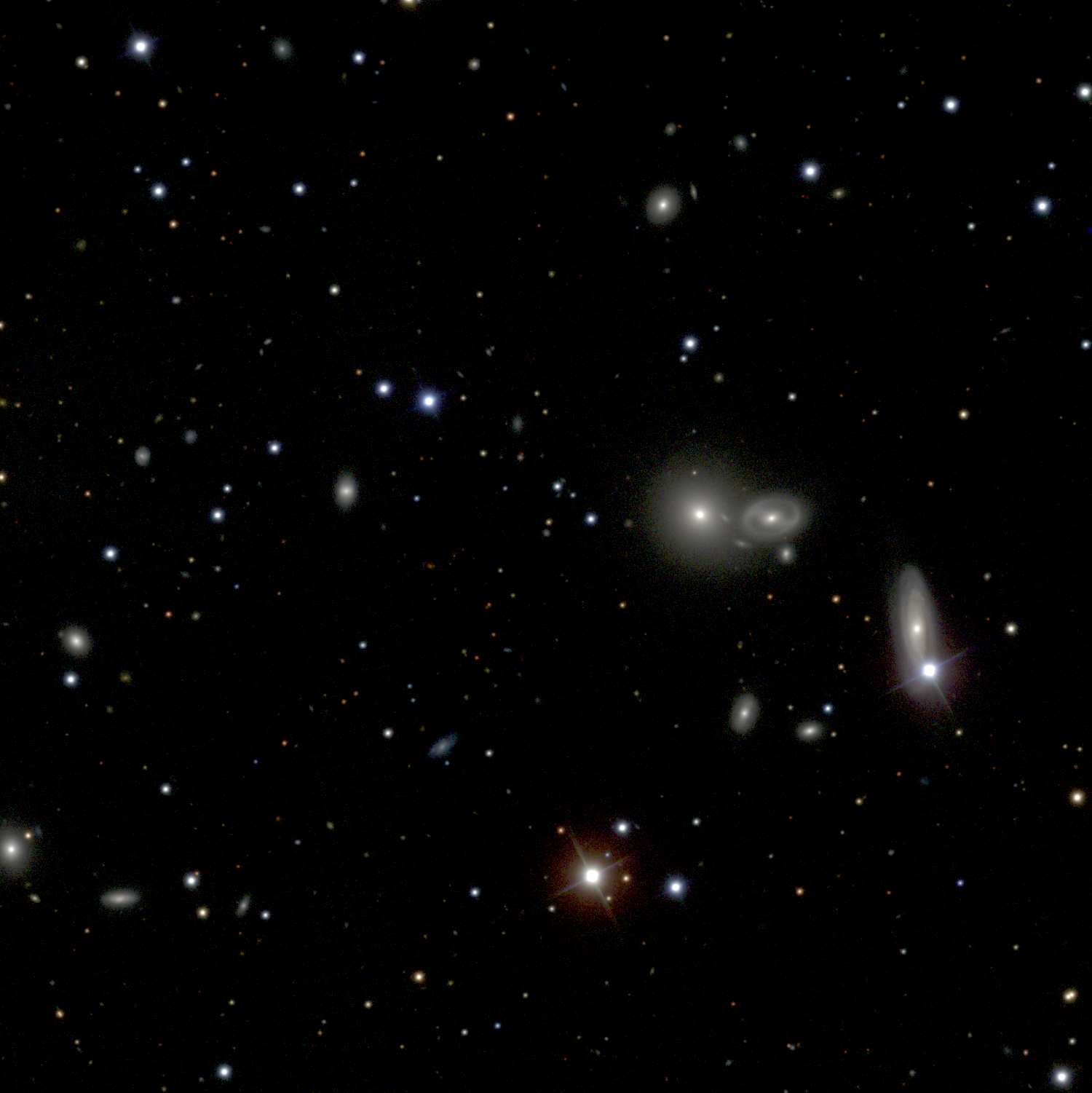

However, with greater control comes greater responsibility: Now we need to pick both stretch ranges as before and a value for the softening parameter. But that having been said, the asinh() stretch is often very good for picking out detail in many images, particularly those like SDSS with galaxies on a (mostly) black background.

|

|

|

A Note on Color |

Top |

When creating a color composite, normally three images are stretched independently and mapped to red, green, and blue planes in the composite. This is what mViewer and most other astronomical software does.

There are some variations on this that aren't available through mViewer but worth noting. It is possible to map more than three images into the final result by mapping them individually into some odd color composite and then merging the results. As a simple example, you could have three images that were mapped to red, green and blue and a fourth (most likely whose wavelength was between the red and green input images wavelength) that was mapped to a range of "yellow" intensities. Then merging the red, yellow, green, and blue would give a color image showing effects of all four. This sort of thing is often done in programs like Photoshop (or old-school using glass filters in real color photo processing).

Color is particulary sensitive to the gamma correction mentioned above. This has been explored in detail by Emmanuel Bertin in the documentation for his STIFF software package.

|

|

|

Adaptive Stretch |

Top |

The best known "adaptive" transform for image brightness is histogram equalization. Essentially, the histogram is flattened, so there are equal numbers of pixels with brightness 0, 1, 2 ... 255. This is simple enough if you have a fine enough histogram.

In general histogram equalization tends to wash out an image and with astronomical data this is particularly bad. Since so few pixels in terms of total count are very bright, they often much all end up in the same 255 bin.

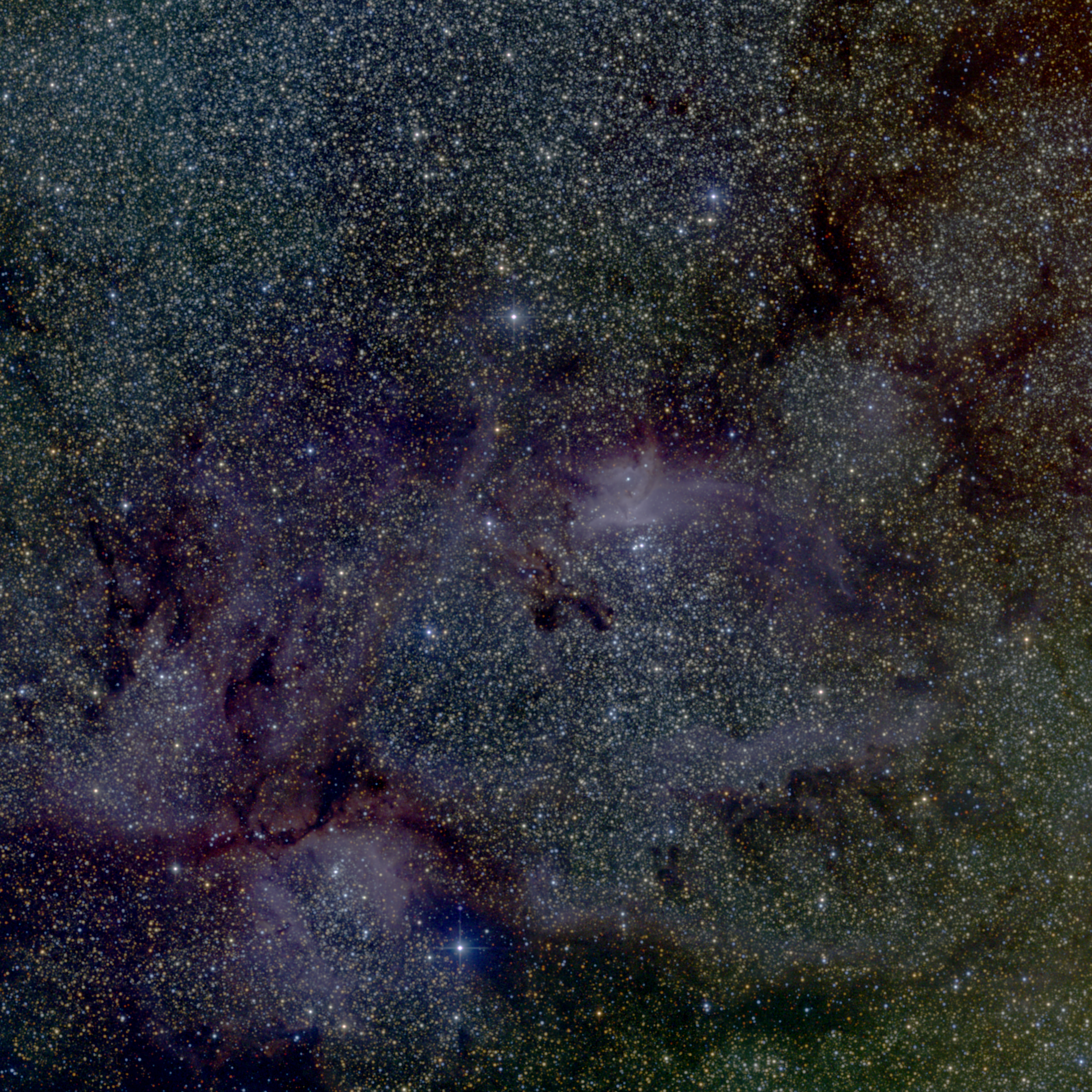

This can be corrected for, however, by targetting a transformed histogram shape that is not "equal" but something more in line with what we expect/wish the resultant image to look like. There are many such "target" histogram shapes; we will describe the one we have implemented in mViewer.Our model distribution is one where there is a low-level, random (or semi-random) population containing the majority of the pixels and a long positive tail of more unevenly-spaced (in brightness) bright pixels. The high level tail is often made up of a scattering of stars and the low level is either noise or a background field of nebulosity or densely packed dim background stars. This characterization is purely observational and anecdotal and motivates but does not drive the algorithm.

From the data histogram, we determine the mean and standard deviation of the low-level (semi-gaussian) distribution. This lets us characterize data levels in terms "sigma" values in addition to absolute data values and/or percentiles. Then rather than equalize based on a target uniform distribution, we do so based on the nominal gaussian (via the error function erf()) or a logarithmically transformed erf().

The net result is one where there are a reasonable number of high level bins showing the brightest areas/pixels, adequate detail at the low end, and adaptive flux-sensitive bins in between.

Of course, there is no free lunch. In order to steal a few more bins to show high level structure, the algorithm may have to compromise on the number available at the low end, leading to a little steppiness if taken far enough down into the noise/structure.

One of the most notable features of this approach may be the removal of the need to carefully choice the high-level cutoff. There is almost never any need to use anything but the highest data value as the algorithm maximum. Choice of the low level is still manual but can usually be chosen based on the nature of the background. If the background is all "noise" (e.g. a field of stars or galaxies) then you don't want any of the low level pixels and a minimum of "1 sigma" (one standard deviation above the mean background level) is probably best. If the "background" is all real (dense stars in the galactic plane or the LMC, maps of clouds of dust and gas, etc.) then "-2 sigma" is more appropriate. But usually slight and relatively linear adjustments to this parameter are all that are needed.

|

|

|